Kristin Marie Bivens

Institute of Social and Preventive Medicine, University of Bern,

Switzerland, kristin.bivens@ispm.unibe.ch,

and Harold Washington College — One of the City Colleges of Chicago

kbivens@ccc.edu

Candice A. Welhausen

Auburn University

caw0103@auburn.edu


INTRODUCTION

This article uses a hybrid card sorting-affinity diagramming method to design cognitively scaffolded learning for undergraduate student researchers and address two-year college (2YC) research opportunity inequities. In Technical and Professional Communication (TPC), recent curricular and programmatic research demonstrates that research skills are crucial and desired. For example, Ford and Newmark (2011) included “effectively conduct and communicate research” as an additional component to senior capstone projects (p. 312), and Ilyasova and Bridgeford (2014) incorporated research as one of their five suggested programmatic outcome categories. Further, based on their identification of existing foundational (i.e., rhetoric, writing, technology, and design) and important, yet secondary (i.e., ethics, research, collaboration, and professionalization) undergraduate TPC programmatic learning outcomes for students, Clegg et al. (2020) recommended that TPC programmatic administrators emphasize these secondary areas, such as research, because they are “. . . necessary building blocks for students’ future success” (p. 27). In their explanation, they noted that “research allows students to locate and/or produce information, assess its relevance, and apply the information to address a problem or issue” (Clegg et al., 2020, p. 10). Such programmatic concerns remain important, while the more recent “social justice turn” in TPC (Walton et al., 2019), which advocates an active “social justice stance” (Jones, 2016; Jones et al., 2016, p. 211), presents the opportunity to consider how we might approach these curricular considerations in order to empower marginalized (and minoritized) groups (Jones & Walton, 2018) as well as more actively pursue equity (see Colton & Holmes, 2018).

In this teaching experience report,<sup>1</sup> we connect these two threads—TPC’s focus on undergraduate research as an important component of programmatic learning outcomes and the shift toward a social justice perspective in the field—by advocating that faculty from two- and four-year institutions work together to offer research experiences for undergraduates (REUs) specifically designed for two-year college students. Indeed, students at 2YCs are less likely than their counterparts at four-year institutions to have opportunities to participate in an REU, which are generally thought to be valuable learning experiences (Dillon, 2020; Stanford et al., 2017). Historically, 2YCs are also more likely than four-year institutions to teach minority and generally underserved student populations. In fact, according to recent demographic data from the American Association of Community Colleges (AACC) (2020), for credit-earning students, 26% are Hispanic, 13% are Black, 6% are Asian/Pacific Islander, 1% are Native American, 4% are multiracial, 4% are other/unknown, and 2% are “nonresident alien” (n.p.). These demographics show that 56% of students at 2YCs are either Black, Indigenous, or people of color (BIPOC). To contextualize, according to the most recent (2019–2020) integrated postsecondary education data for the minority-serving and Hispanic-serving institution and 2YC where the first author, Bivens, is affiliated, 85% of students are BIPOC (U.S. Department of Education, 2021a, 2021b).

We suggest that this research opportunity inequity for REUs can be addressed through partnerships between two- and four-year institutions such as ours, which we discuss in this report, and can serve as socially just pedagogical action meant to provide momentum toward an enduring, racially equitable shift in REUs in TPC. To illustrate how such partnerships might be put into practice, we describe a funded research project<sup>2</sup> wherein we hired two undergraduate students from a 2YC institution as research assistants (RAs).<sup>3</sup> More specifically, this project focused on analyzing user-generated content (UGC) or review comments for a civilian first responder app (see Welhausen & Bivens, in press-a, in press-b) and also serves as an example of how cognitive scaffolding—or intentionally sequencing student learning activities to build upon previous learning and knowledge—can be used to support students in REUs in TPC.

To address REU access equity, we designed this portion<sup>4</sup> of our research project specifically so that we could work with 2YC students. Furthermore, because our project was funded, we hired and paid the two RAs for their labor. The cognitively scaffolded instructional approach we employed showed the project RAs one method for conducting content analysis. Whether in the classroom or workplace, this communication design method could be used as an example for conducting content analysis or as a model in any learning or training context. To prepare the project RAs for this research experience, our process included a hybrid research method of (1) open<sup>5</sup> card sorting and (2) affinity diagramming as a pedagogical approach to cognitively sequence the steps in analyzing the review comments we collected. Our full methodological approach is outlined in detail in a forthcoming publication (see Welhausen & Bivens, in press-b).

Framed in the value of REUs in TPC and as part of a research experience at a 2YC, in what follows, we explain the scholarship that informed our cognitive scaffolding instructional approach, track that approach onto our hybrid research method, discuss the potential benefits of researching with undergraduates at 2YCs, and promote REUs at 2YCs as a move toward more equitably offering research experiences to undergraduates. First, we frame our experience report by briefly reviewing the role of research in undergraduate learning and the importance of scaffolding students’ learning to safely promote cognitive leaps. We then share the hybrid data analysis method we used. Next, we provide relevant context for our experience report before we share our pedagogical approach. We suggest that our approach allowed us to more easily design sequenced activities to build toward the higher-order thinking skills (e.g., evaluating review comments and creating categories) that the RAs would need to conduct the content analysis portion of the project. Finally, we discuss the pedagogical benefits of our approach for learners, and we conclude by arguing that these benefits can address the inequity in REU opportunities.

RESEARCH IN UNDERGRADUATE LEARNING AND TWO-YEAR COLLEGE CONTEXTS

The academic and anticipated professional benefits of REUs in science, technology, engineering, and math are well established and widely known (see Bangera & Brownell, 2014). In contrast, in the social sciences, REUs have been called research-based learning (RBL) and have been defined as “students conduct[ing] their own research with the help of a supervisor” (Wessels et al., 2020, p. 2) or simply described as undergraduate research (Haeger et al., 2020). Regardless of the term, it is likely that these research experiences vary in each disciplinary context. Yet they are similar in that students investigate some kind of empirical phenomenon with the primary objective being “to provide [them] with an opportunity to experience participation in research,” as Wessels et al. (2020) put it (p. 1).

Whether at a two- or four-year institution, REUs are valuable for learners (Dillon, 2020; Stanford et al., 2017) even if “community colleges [face] unique challenges” in implementing REUs (Bock & Hewlett, 2018), which, at least anecdotally, we know is the case. To address these unique challenges, Schuster (2018) provided a list of high-impact practices from the AACC (p. 276). Along with capstone courses or projects and common intellectual experiences like those experienced in learning communities, the list also included undergraduate research. Schuster’s (2018) motivation derived from his argument about “the importance of starting undergraduate research when students [who] are still within their first two-years [sic] of college in general and when they are at two-year colleges in particular” (p. 277). Although 2YCs “are not [traditionally] seen as institutions where faculty members and students are engaged in scholarly research and the production of knowledge . . . undergraduate research is being conducted in community colleges [2YC] across the nation” (Boggs, 2009, pp. v–vi). However, as Martin and Rose (2005) explained, “Context is important—not just for the texts we study but also for the research we undertake” (p. 251). We contend that the academic and professional value students derive from research-based learning experiences—funded or unfunded at two- or four-year institutions—depends wholly upon the careful pedagogical research experience design (e.g., cognitive scaffolding) and the actual real-world context (such as challenges brought on by the COVID-19 pandemic) of the research experiences themselves. These factors work in tandem either for or against the quality of the research experience and its value for students. 
As an example of an active contribution toward a socially just pedagogical practice in TPC (Jones, 2016) that encourages an equitable distribution of research opportunities, providing REUs for 2YC students through the type of partnership we propose is a move toward what we hope will be a pronounced and enduring equity shift in access to research opportunities for 2YC students.

RESEARCH TEAM CONTEXT

Typically, the kinds of REUs in which Bivens has participated with students at a 2YC took place in independent study courses or National Science Foundation–funded science courses with communication components. However, at the RAs’ institution, due to budgetary restrictions, independent study opportunities—unless they directly result in or contribute to an academic credential (i.e., degree or certificate)—are not currently available for students. At the same time, with generous funding through an early career grant from the Special Interest Group on Design of Communication (SIGDOC), the portion of the research project described in this report included a team—composed of two faculty members (Bivens and Welhausen) and two project RAs (both from a 2YC)—and provided compensation for the project RAs’ labor. Previously, Bivens worked with the RAs<sup>6</sup> on another extended research project examining TPC curricula and programs at 2YCs<sup>7</sup> (see Bivens et al., 2020a, 2020b). For these reasons, the partnership we describe, including working with these RAs, was the most convenient research team configuration for this project.

In order to acquaint Welhausen with the RAs and vice versa, we convened in May 2019 (Meeting 1) in a conference room at the Newberry Library<sup>8</sup> in Chicago, Illinois,<sup>9</sup> to explain the scope of the research project and to field questions from the RAs. During this initial research team meeting, all research team members had the opportunity to talk informally and ask questions related to the project. For example, since the RAs were nearing graduation (Spring 2020), they were encouraged to ask questions about Welhausen’s four-year institution. Then, later that year in November 2019 (Meeting 2), the entire research team met again at the RAs’ institution for a day-long research meeting to discuss, define, and practice analyzing the project’s dataset (see Welhausen & Bivens, in press-a); basically, this second meeting was a formal training session.

However, prior to Meeting 2, the RAs met separately with Bivens to receive hard copy printouts of the nearly 500 mHealth app review comments that comprised our UGC dataset for analysis and the open card sorting materials (e.g., notecards, markers, and envelopes). During this meeting, which was held in Bivens’s office on a 2YC campus, Bivens asked the RAs about their current respective workloads (it was just past the midterm of the semester; both were employed part-time and enrolled in full-time studies) and briefly described card sorting and affinity diagramming. Later, the RAs were emailed instructions (Appendix A) regarding open card sorting and background readings about card sorting and affinity diagramming. The RAs were encouraged to report any issues related to understanding the card sorting and affinity diagramming content readings. Each research team member completed open card sorting with the comments to become familiar with their content and to independently create preliminary or practice codes prior to Meeting 2. Through the at-home independent card sorting and later through the affinity diagramming process, the RAs demonstrated their understanding of these usability research methods. The starting point of Meeting 2 was a full research team discussion about the preliminary coding from the independent open card sorting, which was one of the cognitively scaffolded learning activities we integrated into the research and analysis<sup>10</sup> process for the RAs.

SCAFFOLDING TO HELP STUDENT RESEARCHERS MAKE COGNITIVE LEAPS DURING MEETING 2

In her work examining an experienced writing tutor’s verbal and nonverbal work with students in a university writing center, Thompson (2009) reviewed the pedagogical origin of the term scaffolding, noting that it was initially used in the 1970s by Bruner and colleagues; then it was taken up by Vygotsky regarding infant language acquisition (p. 418). Cognitive scaffolding (see also Cromley & Azevedo, 2005) is a pedagogical concept or learning practice that encompasses different kinds of instructional moves made to help a student or any learner solve a problem on their own. Thompson defined cognitive scaffolding as “lead[ing] and support[ing] the student in making correct and useful responses” and motivational scaffolding as “provid[ing] feedback and help[ing] maintain focus on the task and motivation” (p. 417). Although the distinctions between these kinds of scaffolding are useful, this teaching experience report focuses on the method we used to cognitively sequence learning activities. To do so, we used open card sorting and affinity diagramming as a hybrid method composed of two learning activities. These learning activities broke down the content analysis into two parts that first allowed the RAs to get to know the reviewer content, then to evaluate it. By doing this, we sequenced opportunities for knowledge building through the learning activities (i.e., open card sorting and affinity diagramming) during the learning process to prepare for content analysis. For example, the cognitive scaffolding we designed presented an opportunity to blend two methods commonly used in usability research—open card sorting and affinity diagramming—to gently lead and support the RAs in the cognitive work required to analyze the dataset.

Grady (2006) discussed scaffolding within the context of online pedagogy as a strategy used to “help learners span a cognitive gap or leap a learning hurdle” (p. 148; see also Grady & Davis, 2005/2017). Meeting 2 was designed as a training workshop (with breakfast and lunch served). Our intention for the tasks assigned prior to Meeting 2 was two-fold: to introduce card sorting and affinity diagramming via content readings and to provide a low-stakes at-home learning activity to practice open card sorting. We reasoned that the open card sorting would prepare the RAs for affinity diagramming (the major task of Meeting 2), which was the preparatory learning activity preceding the independent content analysis aimed at evaluating the review comments in order to create categories. In this way, we wanted to lead and support (Thompson, 2009, p. 417) the RAs as they became acquainted with and understood the content of the review comments. Then, as they engaged in this process, they also practiced the skills needed to evaluate and analyze that content. In this way, and as shown in Figure 1, the RAs’ learning process was supported via the cognitive scaffolding through card sorting and affinity diagramming content readings, the low-stakes open card sorting learning activity, and the discussions during Meetings 1 and 2. Furthermore, we certainly used motivational scaffolding by providing encouragement and guidance, supportive feedback, and training during each meeting.

BUILDING CONTENT ANALYSIS SKILLS THROUGH OPEN CARD SORTING AND AFFINITY DIAGRAMMING

In TPC, content analysis can either be qualitative (e.g., Geisler, 2018) or quantitative (e.g., Brumberger & Lauer, 2015) and requires familiarity with the text in order to identify and define analytical categories before developing the conditions or rules that dictate how to code content. Essentially, content analysis requires making decisions about the level of analysis and how many categories to code for, as well as how to value the occurrence of a code and its frequency. Without making these decisions, content analysis can be difficult and overwhelming, especially for novice researchers or practitioners in any kind of learning or training context.
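The distinction between valuing the occurrence of a code and valuing its frequency can be illustrated with a short sketch. The following Python example is only an illustration under stated assumptions: the category names (`usability`, `bug_report`, `praise`) and the sample data are hypothetical and are not the categories or comments from our project. It counts how many times each code was applied in total (frequency) and how many distinct comments received each code at least once (occurrence).

```python
from collections import Counter

# Hypothetical coded data: each review comment carries the codes assigned to it.
# Category names and values are invented for illustration only.
coded_comments = [
    {"id": 1, "codes": ["usability", "praise"]},
    {"id": 2, "codes": ["bug_report"]},
    {"id": 3, "codes": ["usability", "usability", "bug_report"]},
    {"id": 4, "codes": []},  # e.g., a nonsubstantive comment left uncoded
]


def tally_codes(comments):
    """Return (frequency, occurrence) counters for a list of coded comments.

    frequency  -- total number of times each code was applied
    occurrence -- number of comments in which each code appears at least once
    """
    frequency = Counter()
    occurrence = Counter()
    for comment in comments:
        frequency.update(comment["codes"])
        # A set removes repeats, so each comment counts once per code.
        occurrence.update(set(comment["codes"]))
    return frequency, occurrence


frequency, occurrence = tally_codes(coded_comments)
for code in sorted(frequency):
    print(f"{code}: applied {frequency[code]} times in {occurrence[code]} comments")
```

Deciding in advance which of the two tallies matters for the research question is one of the decisions that can make content analysis less overwhelming for novice researchers.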

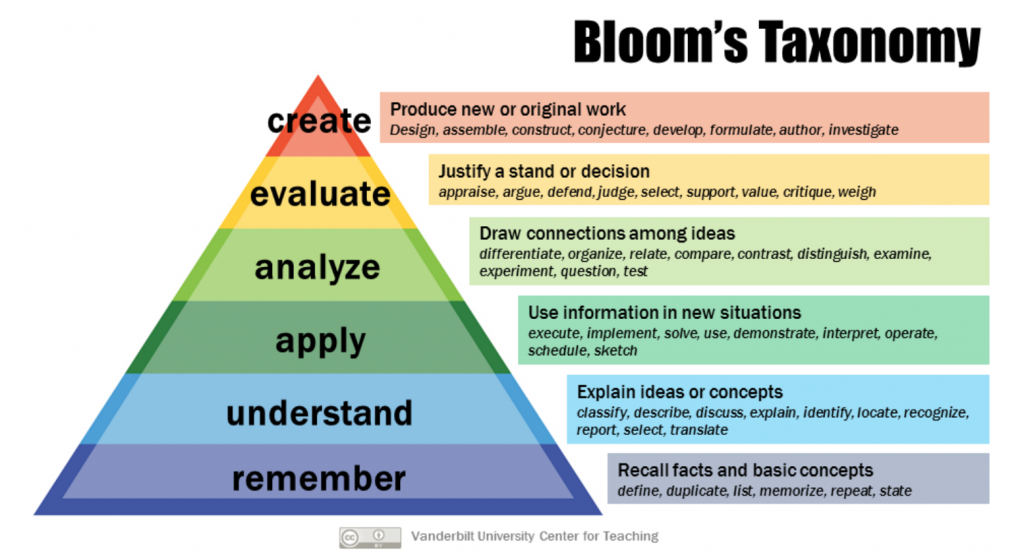

We designed the learning activities (e.g., content readings and open card sorting) to achieve the ultimate goal of our research project: to analyze the review comments. Moving backwards from that goal, we cognitively scaffolded each learning activity by using the revised version of Bloom’s taxonomy of learning (see Figure 2). By doing so, we sequenced each activity, such as the assigned content readings about card sorting and the at-home independent open card sorting, to provide a learning opportunity for the RAs to move from one revised Bloom’s taxonomy level to the next, which provided an opportunity for the RAs to practice the skills that make up content analysis (i.e., coding, categorizing, and evaluating). To illustrate, by first understanding open card sorting, the RAs could then progress to the next level of applying this understanding by completing the at-home independent open card sorting of the review comments. In other words, the card sorting content readings prepared the RAs to move from understanding card sorting to applying or duplicating that understanding to then open card sorting the review comments.

(Note. From Bloom’s Taxonomy [Graphic], by Center for Teaching, Vanderbilt University, 2016, Flickr (https://www.flickr.com/photos/vandycft/29428436431). CC BY 2.0.)

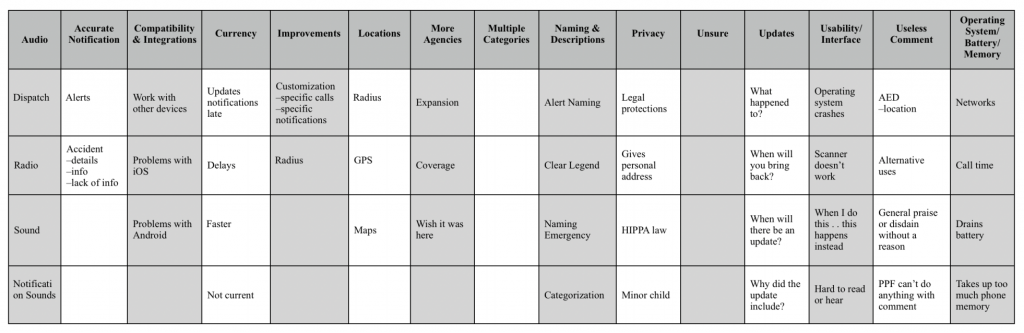

After the affinity diagramming, team members were able to question the valuation of other team members’ placements, defend their own valuations, and review comment placements within each category. From there, we worked with the RAs to create (the revised Bloom’s taxonomy of learning’s highest cognitive process) categories for the review comments as shown in Table 1 below. In the process, team members drew from their appraisals from their at-home independent card sorting categorization experiences. These experiences included noting the affinity diagramming similarities or differences. Ultimately, those similarities and differences indicated the categorization for the review comments.

Since content analysis also requires making decisions about how categories will be defined or described (see evaluate and create in the revised Bloom’s taxonomic terms from Figure 2) so they reliably suit the content’s codes, an additional motivation for the at-home independent open card sorting was the lower-stakes experience of practicing grouping the review comments and defining categories in different ways prior to sharing the open card sorting results at Meeting 2. For example, both RAs were allocated at least 10 days to become familiar with the review comments and create and define preliminary categories prior to Meeting 2. To show the value of their labor and this process, they tracked their RA hours and were paid for their work, which we think offered an additional motivation. For their at-home independent open card sorting, they had to develop rules for coding content in order to make decisions about what to do with nonsubstantive, unactionable, or otherwise unusable review comments. This work became the starting place for Meeting 2. The above-mentioned at-home independent open card sorting groundwork and the affinity diagramming during Meeting 2 were necessities prior to the RAs independently coding the review comments at home after Meeting 2. The low-stakes at-home independent open card sorting was a precursor to and practice for both the affinity diagramming during Meeting 2 and the formal at-home independent content analysis after Meeting 2. In other words, the low-stakes at-home open card sorting and the affinity diagramming were prerequisite learning activities. In fact, the affinity diagramming process was informal content analysis practice, which the RAs eventually repeated at home, post–Meeting 2. Through these learning activities, we cognitively scaffolded the RAs’ learning from becoming familiar with the review comments, to understanding them, to eventually analyzing and then formally evaluating them.

The purpose of the affinity diagramming was to visualize the categorization of each review comment, ensuring a supportive environment where we could eventually discuss problematic or confounding comments from the dataset. After our preliminary coding of the comments during affinity diagramming, we talked about the emerging preliminary categories, collapsing some narrower categories into broader ones and deciding what to do if a research team member was unsure where a comment belonged. In addition to visualizing the content analysis process through affinity diagramming, the aim was to address any potential kerfuffle, discuss the remedy, and code the comments consistently. Furthermore, the affinity diagramming was the last in-person supportive training prior to the RAs’ analysis of the review comments at home. In other words, in our REU design, Meeting 2 was the final formal, cognitively scaffolded learning activity, training session, and preparatory step before the RAs independently conducted the content analysis. Prior to closing Meeting 2, the categories were finalized and recorded, and we were confident that we had guided the RAs through the cognitive sequencing necessary to independently analyze the review comments. Based on the progress made in Meeting 2, including the affinity diagramming process, results, and discussions, we then shared data coding instructions (Appendix B) via email, described the content analysis process as a reminder, and disseminated the agreed-upon categories and example codes created during Meeting 2.
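For readers who want a concrete way to spot the comments a team might need to discuss, the sketch below compares two coders’ category assignments and lists where they differ. It is a minimal illustration of simple percent agreement, a generic technique and not a description of the procedure we followed; the coder lists and category names are hypothetical.

```python
def percent_agreement(codes_a, codes_b):
    """Share of comments to which two coders assigned the same category."""
    if len(codes_a) != len(codes_b):
        raise ValueError("Both coders must code the same number of comments.")
    if not codes_a:
        raise ValueError("At least one coded comment is required.")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return matches / len(codes_a)


def disagreements(codes_a, codes_b):
    """Positions (0-based) of comments the two coders categorized differently."""
    return [i for i, (a, b) in enumerate(zip(codes_a, codes_b)) if a != b]


# Hypothetical assignments for four review comments, in the same order.
coder_one = ["usability", "bug_report", "praise", "unusable"]
coder_two = ["usability", "praise", "praise", "unusable"]

agreement = percent_agreement(coder_one, coder_two)
to_discuss = disagreements(coder_one, coder_two)
print(f"Agreement: {agreement:.0%}; comments to discuss: {to_discuss}")
```

A list of differing positions like `to_discuss` could serve as the agenda for a team conversation about confounding comments, much as the affinity diagram made such comments visible in person.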

PEDAGOGICAL BENEFITS OF RESEARCHING WITH UNDERGRADUATES AT TWO-YEAR COLLEGES

Recently, a tweet by professor and writer David Bowles (2020) reminded readers that historically, education—from Aristotle through the next 2,500 years or so—was intended for the elite, and only in the last 150 years has education been offered to the public. He noted that “no human society had ever attempted to formally educate the entire populace,” and he described contemporary public education as “smack-dab in the middle of the largest experiment on children ever done.” For any educator, an awareness of the relative newness of public education within the context of the history of humankind might be a sobering, humbling thought. In fact, it might put any instructional design or pedagogical method, like using the revised Bloom’s taxonomy of learning to design REUs, into question. In fact, Bloom’s original taxonomy of learning was published in 1956, then revised in 2001. We would hypothesize that as the design of learning in communication contexts with regard to REUs in TPC is better understood, perhaps part of Bloom’s taxonomy—usually used in K–12 educational settings—might not directly correlate to learning contexts in higher education (or even K–12). More specifically, the social aspects of learning are not included in any of Bloom’s frameworks. And as learning remotely has shown us during the COVID-19 pandemic, socialization is an important (and for some, the most important) element of learning (and some instructors are more adept in supporting student learning in online learning contexts than others). As we designed this REU, we were mindful of the preeminence of socialization in learning and collaboration, which is why we met together as a research team during Meetings 1 and 2. For example, to promote socialization during Meeting 2, we provided meals, which we hoped would encourage informal exchanges and a pleasant experience. 
In fact, although these meetings were integral for planning, they were also sources of enrichment and motivation for us through experiencing the collaborative joy of participating in cross-institutional research and working on a research team—unforeseeable, valuable, and affective project outcomes outside of our design of these learning activities and research experiences in general.

In tandem with TPC scholars’ calls to assess and prioritize research for undergraduates (Ford & Newmark, 2011; Ilyasova & Bridgeford, 2014; Clegg et al., 2020), we suggest that partnerships, like the project we undertook, be implemented across two- and four-year institutions. Out of 1,235 public and private not-for-profit 2YCs, 990 2YCs offer at least 1 TPC course, which is 80% of these schools (Bivens et al., 2020a, 2020b). In their content analysis of these 1,235 2YCs, Bivens et al. (2020a, 2020b) created a state-by-state list of 2YCs offering TPC curricula. Using this list as a starting point to institutionally locate potential collaborators and form partnerships, we advocate that faculty from four-year institutions work with faculty from 2YC feeder schools—the schools from which 2YC transfer students primarily matriculate—to design cross-institutional, context-specific research and learning experiences for undergraduate students from both two- and four-year institutions. By doing so, these TPC instructors can intentionally contribute to efforts to address research opportunity inequities.

| Revised Bloom’s Taxonomy Verbs | Description | Cognitively Scaffolded Learning Outcome |
|---|---|---|
| Create | Produce new or original work. | —developed independent content analysis of review comments —constructed categories through affinity diagramming —assembled review comments into preliminary categories through open card sorting |
| Evaluate | Justify a stand or decision. | —defended reviewer comment category placement post–affinity diagramming —valued review comments via affinity diagramming —appraised review comments through open card sorting |
| Analyze | Draw connections among ideas. | —questioned the categorizations of others’ post–affinity diagramming —related similarities among review comments during affinity diagramming —organized review comments into categories during open card sorting |
| Apply | Use information in new situations. | —implemented card sorting content readings —implemented affinity diagramming content readings —demonstrated card sorting and affinity diagramming knowledge |
| Understand | Explain ideas or concepts. | —reported issues related to card sorting and affinity diagramming content readings —discussed open card sorting process at outset of Meeting 2 —identified and selected relevant card sorting and affinity diagramming knowledge for future use |
| Remember | Recall facts and basic concepts. | —repeated affinity diagramming process after training in order to conduct content analysis —duplicated open card sorting method process independently based on content readings —memorized open card sorting and affinity diagramming processes |

CONCLUSION

In closing, this teaching experience report describes the process we used to conduct research with two project RAs at a 2YC. Through our discussion, we also demonstrated how to cognitively scaffold and design learning activities ranging from at-home, lower-stakes open card sorting to in-person, higher-stakes affinity diagramming that prepared these project RAs to analyze content independently and consistently. Our example provides a model for students and practitioners alike to learn about content analysis through the hybrid open card sorting-affinity diagramming method (as previously noted, see Welhausen & Bivens [in press-b] for more detail about our process), which can be applied to other learning and/or training contexts involving content analysis. At the same time, we also advocate for creating and sharing (paid) TPC research opportunities or REUs for students at 2YCs like the one we describe in this report. While we recognize that because TPC instructors are often housed in English departments, obtaining funding for research in humanities-based disciplines, particularly to pay RAs, can be a significant challenge, we also propose that faculty from four-year institutions might be better positioned to procure internal and even external funding for such endeavors. Finally, we suggest that faculty at four-year institutions look for opportunities to work with colleagues at 2YCs to form partnerships like the one we describe here, incorporating as many faculty and students from two- and four-year institutions as can be sufficiently supported. As our discussion in this report shows, the design of our REU moves us toward actively pursuing research opportunity equity for undergraduates—a meaningful, feasible, and ideally enduring pedagogical action aimed to contribute to socially just and racially equitable REUs in TPC that we wholeheartedly endorse.

ACKNOWLEDGEMENTS

We gratefully acknowledge Gustav Karl Henrik Wiberg’s work retrieving the iOS user comments and his troubleshooting attempts to gather as many comments as possible. Further, we are thankful for the PulsePoint Foundation’s willingness to correspond with us regarding our project. In addition to the critical and helpful reviewer feedback, we also appreciate Allison Boman’s and the CDQ team’s editorial assistance during the publication cycle for this article. Please see the article’s endnotes for other acknowledgments.

ENDNOTES

1. The Institutional Review Board for the City Colleges of Chicago reviewed this project and found it to be exempt.

2. We are grateful for an early career grant from the Special Interest Group on Design of Communication, which enabled this research project.

3. We also hired one RA from the four-year institution where Welhausen works, who assisted with compiling preliminary results for this project (see Welhausen & Bivens, 2019).

4. As we indicate in the endnote above, some of the grant funding we received was used to hire an RA from Welhausen’s institution as we sought to include students from both of our schools for this project. Ideally, students from both of our institutions would have worked together on the different phases of this project. However, because Bivens was in Chicago, Illinois, and Welhausen teaches in Auburn, Alabama, this kind of interaction was not possible. Further, in this report we focus on the experience of working with RAs from 2YCs who are less frequently the beneficiaries of REUs.

5. Closed card sorting uses predetermined categories to sort content or items, whereas open card sorting does not.

6. We appreciate the RAs’ labor as research assistants on the research team.

7. Bivens appreciates the Council for Programs in Technical and Scientific Communication for a grant award that funded this project.

8. We appreciate the Newberry Library for their willingness to furnish a space for Meeting 1 for the research team.

9. Welhausen thanks the Recognition and Development Committee in the English Department of Auburn University for providing the funding to travel to Chicago to conduct this analysis.

10. For a detailed discussion of our categorization and analytical process, please see Welhausen and Bivens (in press-a).

REFERENCES

American Association of Community Colleges. (2020). Fast facts 2020. https://www.aacc.nche.edu/research-trends/fast-facts

Bangera, G., & Brownell, S. E. (2014). Course-based undergraduate research experiences can make scientific research more inclusive. CBE—Life Sciences Education, 13(4), 602–606. https://doi.org/10.1187/cbe.14-06-0099

Bivens, K. M., Elliott, T. J., & Wiberg, G. K. H. (2020a). Locating technical and professional communication at two-year institutions. Programmatic Perspectives, 11(2), 6–66.

Bivens, K. M., Elliott, T. J., & Wiberg, G. K. H. (2020b). Updating information about technical and professional communication at two-year colleges. Teaching English in the Two-Year College, 48(2), 200–214.

Bock, H., & Hewlett, J. (2018). Undergraduate research: Changing the culture at community colleges. Scholarship and Practice of Undergraduate Research, 1(4), 49–50. https://doi.org/10.18833/spur/1/4/5

Boggs, G. (2009). Preface. In B. D. Cejda & N. Hensel (Eds.), Undergraduate research at community colleges. Council on Undergraduate Research. https://www.cur.org/assets/1/7/Undergraduate_Research_at_Community_Colleges_-_Full_Text_-_Final.pdf

Bowles, D. [@DavidOBowles]. (2020, October 5). I’ll let you in on a secret. I have a doctorate in education, but the field’s basically just a 100 years old. We don’t really know what we’re doing. Our scholarly understanding of how learning happens is like astronomy 2000. [Tweet]. Twitter. https://twitter.com/DavidOBowles/status/1313246219905437701

Brumberger, E., & Lauer, C. (2015). The evolution of technical communication: An analysis of industry job postings. Technical Communication, 62(4), 224–243.

Center for Teaching, Vanderbilt University. (2016, September 6). Bloom’s Taxonomy [Graphic]. https://www.flickr.com/photos/vandycft/29428436431

Clegg, G., Lauer, J., Phelps, J., & Melonçon, L. (2020). Programmatic outcomes in undergraduate technical and professional communication programs. Technical Communication Quarterly, 30(1), 19–33. https://doi.org/10.1080/10572252.2020.1774662

Colton, J. S., & Holmes, S. (2018). A social justice theory of active equality for technical communication. Journal of Technical Writing and Communication, 48(1), 4–30. https://doi.org/10.1177/0047281616647803

Cromley, J. G., & Azevedo, R. (2005). What do reading tutors do? A naturalistic study of more or less experienced tutors in reading. Discourse Processes, 40(2), 83–113. https://doi.org/10.1207/s15326950dp4002_1

Dillon, H. E. (2020). Development of a mentoring course-based undergraduate research experience (M-CURE). Scholarship and Practice of Undergraduate Research, 3(4), 26–34.

Ford, J. D., & Newmark, J. (2011). Emphasizing research (further) in undergraduate technical communication curricula: Involving undergraduate students with an academic journal’s publication and management. Journal of Technical Writing and Communication, 41(3), 311–324. https://doi.org/10.2190/TW.41.3.f

Geisler, C. (2018). Coding for language complexity: The interplay among methodological commitments, tools, and workflow in writing research. Written Communication, 35(2), 215–249. https://doi.org/10.1177/0741088317748590

Grady, H. M. (2006, October). Instructional scaffolding for online courses. In 2006 IEEE International Professional Communication Conference (pp. 148–152). IEEE.

Grady, H. M., & Davis, M. T. (2005/2017). Teaching well online with instructional and procedural scaffolding. In K. C. Cook & K. Grant-Davie (Eds.), Online education: Global questions, local answers (pp. 101–122). Baywood.

Haeger, H., Banks, J. E., Smith, C., & Armstrong-Land, M. (2020). What we know and what we need to know about undergraduate research. Scholarship and Practice of Undergraduate Research, 3(4), 62–69.

Ilyasova, K. A., & Bridgeford, T. (2014). Establishing an outcomes statement for technical communication. In T. Bridgeford, K. S. Kitalong, & B. Williamson (Eds.), Sharing our intellectual traces: Narrative reflections from administrators of professional, technical, and scientific communication programs (pp. 53–80). Baywood.

Jones, N. N. (2016). The technical communicator as advocate: Integrating a social justice approach in technical communication. Journal of Technical Writing and Communication, 46(3), 342–361. https://doi.org/10.1177/0047281616639472

Jones, N. N., Moore, K. R., & Walton, R. (2016). Disrupting the past to disrupt the future: An antenarrative of technical communication. Technical Communication Quarterly, 25(4), 211–229. https://doi.org/10.1080/10572252.2016.1224655

Jones, N. N., & Walton, R. (2018). Using narratives to foster critical thinking about diversity and social justice. In A. M. Haas & M. F. Eble (Eds.), Key theoretical frameworks: Teaching technical communication in the twenty-first century (pp. 241–267). Utah State University Press.

Martin, J. R., & Rose, D. (2005). Designing literacy pedagogy: Scaffolding asymmetries. In J. Webster, C. Matthiessen, & R. Hasan (Eds.), Continuing discourse on language (pp. 251–280). Continuum.

Schuster, M. (2018). Undergraduate research at two-year community colleges. Journal of Political Science Education, 14(2), 276–280. https://doi.org/10.1080/15512169.2017.1411273

Stanford, J. S., Rocheleau, S. E., Smith, K. P., & Mohan, J. (2017). Early undergraduate research experiences lead to similar learning gains for STEM and non-STEM undergraduates. Studies in Higher Education, 42(1), 115–129. https://doi.org/10.1080/03075079.2015.1035248

Thompson, I. (2009). Scaffolding in the writing center: A microanalysis of an experienced tutor’s verbal and nonverbal tutoring strategies. Written Communication, 26(4), 417–453. https://doi.org/10.1177/0741088309342364

U.S. Department of Education. (2021a). Integrated postsecondary education data system. https://nces.ed.gov/ipeds/datacenter/institutionprofile.aspx?unitId=144209&goToReportId=6

U.S. Department of Education. (2021b). College scorecard. https://collegescorecard.ed.gov/school/?144209-City_Colleges_of_Chicago-Harold_Washington_College

Walton, R., Moore, K., & Jones, N. (2019). Technical communication after the social justice turn: Building coalitions for action. Routledge.

Welhausen, C. A., & Bivens, K. M. (2019). Experience report: Using content analysis to explore users’ perceptions and experiences using a novel citizen first responder app. Proceedings of the 37th ACM International Conference on the Design of Communication, 33, 1–6. https://doi.org/10.1145/3328020.3353953

Welhausen, C. A., & Bivens, K. M. (in press-a). Civilian first responder mHealth apps, interface rhetoric, and amplified precarity. Rhetoric of Health and Medicine, 4(4).

Welhausen, C. A., & Bivens, K. M. (in press-b). mHealth apps and usability: Using user-generated content to explore users’ experiences with a civilian first responder app. Technical Communication, 68(3).

Wessels, I., Rueß, J., Gess, C., Deicke, W., & Ziegler, M. (2020). Is research-based learning effective? Evidence from a pre–post analysis in the social sciences. Studies in Higher Education, 1–15. Advance online publication. https://doi.org/10.1080/03075079.2020.1739014

ABOUT THE AUTHORS

Kristin Marie Bivens is the scientific editor for the Institute of Social and Preventive Medicine, University of Bern, Switzerland; and she is an associate professor of English at Harold Washington College — one of the City Colleges of Chicago (on leave). Her scholarship examines the circulation of information from expert to non-expert audiences in critical care contexts (e.g., intensive care units, sudden cardiac arrest, and opioid overdose) with aims to offer ameliorative suggestions to enhance communication.

Candice A. Welhausen is an assistant professor of English at Auburn University with an expertise in technical and professional communication. Before becoming an academic, she was a technical writer/editor at the University of New Mexico Health Sciences Center in the department formerly known as Epidemiology and Cancer Control. Her scholarship is situated at the intersection of technical communication, visual communication and information design, and the rhetoric of health and medicine.

APPENDIX A: PULSE POINT PROJECT PREPARATION FOR NOVEMBER 15, 2019

It was great to see you today and meet to talk about the preparatory work for November 15.

To review, 1) please read the following pages about card sorting before you begin sorting the comments:

- https://www.nngroup.com/articles/card-sorting-definition/

- https://www.usability.gov/what-and-why/glossary/cardsorting-or-card-sort.html

- https://www.usability.gov/how-to-and-tools/methods/cardsorting.html

- https://libguides.memphis.edu/c.php?g=587193&p=4068156

- https://blogs.cornell.edu/usabilitytoolkit/2019/06/04/cardsorting/

Then, 2) please watch the following video about card sorting before you begin sorting the comments: open versus closed card sorting

After you have read and watched the sources on card sorting, then please 3) prepare to sort the cards using the open card sort method. This process includes: reading through and annotating all iOS and Android comments, cutting out those comments, and pasting them to the note cards.

After preparing to sort the cards, then please 4) sort the cards into categories. As you sort the cards, please remember that some comments/cards will not contain substantive information. Categorize those non-substantive comments/cards separately from the other categories you identify.

Once you know your categories, rubber-band the cards in sets of 10 and place them in an envelope. On the envelope, please write the name of the category. The category name might be anywhere from a single word to a phrase of 3-5 words.

Finally, please 5) read the following information prior to November 15:

- https://www.uxmatters.com/mt/archives/2010/09/dancingwith-the-cards-quick-and-dirty-analysis-of-card-sorting-data.php

- https://www.nngroup.com/articles/affinity-diagram/

If you have questions, or parts of these instructions are unclear, please do let me know.

APPENDIX B: SELECTIVE DATA CODING BASED ON 11/15 CATEGORIES FROM AFFINITY DIAGRAMMING

1. Carefully read and review the User Comments from column A (RA 1: comments 2-300 and RA 2: comments 301-600).

Please note that there are an additional 100 comments from 11/15. So, if some of the comments are unfamiliar, that is why.

2. Choose the appropriate code (see below) from the drop-down menu in column B (see the next page for our original list) for the User Comments.

a. Audio,

b. Accurate Notifications,

c. Compatibility & Integrations,

d. Currency,

e. Improvements,

f. Location,

g. More Agencies,

h. *Multiple Categories**,

i. Naming & Descriptions,

j. Privacy,

k. *Unsure,

l. Updates,

m. Usability/Interface,

n. *Useless Comment,

o. Operating System-Battery-Memory

Please tag (@/+) Dr. Bivens or Dr. Welhausen in comments for categories h (multiple categories), k (unsure), and n (useless comment). For **h multiple categories, please be sure to name the multiple categories in the comment, too.

3. Repeat process for all assigned User Comments.

4. When you have finished categorizing all your data, please send a message to Dr. Bivens and Dr. Welhausen. If you have questions, please do let us know.
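
For readers who want to adapt the selective coding procedure above to their own data, the following minimal Python sketch shows one way the coded spreadsheet could be checked after the fact. It is an illustration only and was not part of the instructions given to the RAs. The code list is copied from step 2; the function name `check_coding`, the (row number, code) input format, and the sample rows are assumptions introduced here, not details of the original workflow.

```python
from collections import Counter

# Code list copied from step 2 of Appendix B (asterisks omitted).
CODES = [
    "Audio", "Accurate Notifications", "Compatibility & Integrations",
    "Currency", "Improvements", "Location", "More Agencies",
    "Multiple Categories", "Naming & Descriptions", "Privacy", "Unsure",
    "Updates", "Usability/Interface", "Useless Comment",
    "Operating System-Battery-Memory",
]

# Categories h, k, and n: the instructions ask RAs to tag a researcher
# in a comment whenever one of these codes is chosen.
NEEDS_REVIEW = {"Multiple Categories", "Unsure", "Useless Comment"}


def check_coding(rows):
    """Tally codes and list rows that need follow-up.

    rows is an iterable of (row_number, code) pairs (a hypothetical
    export format for columns A and B of the coding spreadsheet).
    Returns (counts, flagged, invalid), where flagged holds rows coded
    h, k, or n and invalid holds rows whose code is not in CODES.
    """
    counts = Counter()
    flagged = []
    invalid = []
    for row_number, code in rows:
        if code not in CODES:
            invalid.append(row_number)
            continue
        counts[code] += 1
        if code in NEEDS_REVIEW:
            flagged.append(row_number)
    return counts, flagged, invalid


# Hypothetical sample rows, not data from the study.
sample = [(2, "Audio"), (3, "Unsure"), (4, "Privacy"), (5, "Battery"), (6, "Audio")]
counts, flagged, invalid = check_coding(sample)
print(counts["Audio"], flagged, invalid)  # prints: 2 [3] [5]
```

A check of this kind would only supplement, not replace, the researchers’ review of the comments tagged under categories h, k, and n.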